How to Improve Your Data Processing Accuracy

There are two primary uses for data processing:

- Internal processing that businesses use for accounting, lead generation, transactions, and so on.

- External processing that data processing companies use to offer data processing as a service.

Both types of data processing suffer from the same plague: data processing quality control.

The problem is that, unlike most processes, you cannot perform quality control at the end by inspecting the final product. In most cases, it’s impossible to spot incorrect results unless something is wildly off. Most of the time, incorrect data is indistinguishable from correct data unless you have the right tools.

Data processing quality control must be performed on the front end, by validating the data before it’s fed into the data processing system.

Data validation is the key to good data processing. It doesn’t matter how good your data processing model is, or how precise your data processing system is, if you’re using bad data.
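To make front-end validation concrete, here’s a minimal sketch in Python that checks records before they go anywhere near your processing system. The field names (`first_name`, `last_name`, `email`) and the loose email pattern are assumptions for illustration, not a standard.

```python
import re

# Deliberately loose pattern: one "@", no whitespace, a dot in the domain part.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
REQUIRED_FIELDS = ("first_name", "last_name", "email")  # hypothetical field names


def find_problems(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not str(record.get(field) or "").strip():
            problems.append(f"missing {field}")
    email = str(record.get("email") or "").strip()
    if email and not EMAIL_RE.match(email):
        problems.append("malformed email")
    return problems


def split_records(records):
    """Separate records that pass the checks from those that need review."""
    clean, rejected = [], []
    for record in records:
        problems = find_problems(record)
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected
```

Checks like these only catch structural problems. A well-formed email address or phone number can still be wrong, which is why format checks are a first filter rather than a replacement for real verification.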

Consider some of the most common data processing services and tasks:

- Data Deduplication

- Forms Processing

- Order Processing

- Student Loan Processing

- Data Mining

- Insurance Claims Processing

- Market Research Forms Processing

- Check Processing

- Credit Card Processing

- Transaction Processing

- Survey Processing

- Litigation Services

- Mailing List Compilation

- Text and Web Data Mining

Now consider what happens when you feed bad data into each one of these processes. Too much bad data essentially makes any data processing service useless.

So, here’s how to verify your data and improve your data processing results.

How to improve your data processing

The first thing to understand is where most data errors come from.

The vast majority of bad data comes from humans. Whenever you have people entering data on forms or writing it down, there are going to be mistakes. It’s inevitable.

We’ve talked about this before, but removing manual data entry cuts off bad data at the source. Let computers handle, transfer, and copy your data whenever you can. Computers are precise. Humans are not.

However, it’s impossible to remove all manual data entry. This is especially true with personal information. People have to enter their information somewhere, no matter what. So, there are always going to be mistakes to weed out.

That’s where data validation tools come in.

There are two ways to verify large quantities of data: batch processing and data integration.

Batch processing works best for internal data processing, like lead verification and low-volume transaction processing.

Data integration is what you need for big data processing and offering data processing as a service.

Here’s how both work.

Batch data processing

Batch data processing involves some manual work, which is why it’s not great for tasks that require constant, high-volume data processing.

However, it’s perfect for checking contact lists, verifying customer shipping and billing information, verifying leads, and so on. Customer data validation saves you a lot of money and headaches from shipping errors, credit card fraud, and sales inefficiency.

The batch data processing steps are simple:

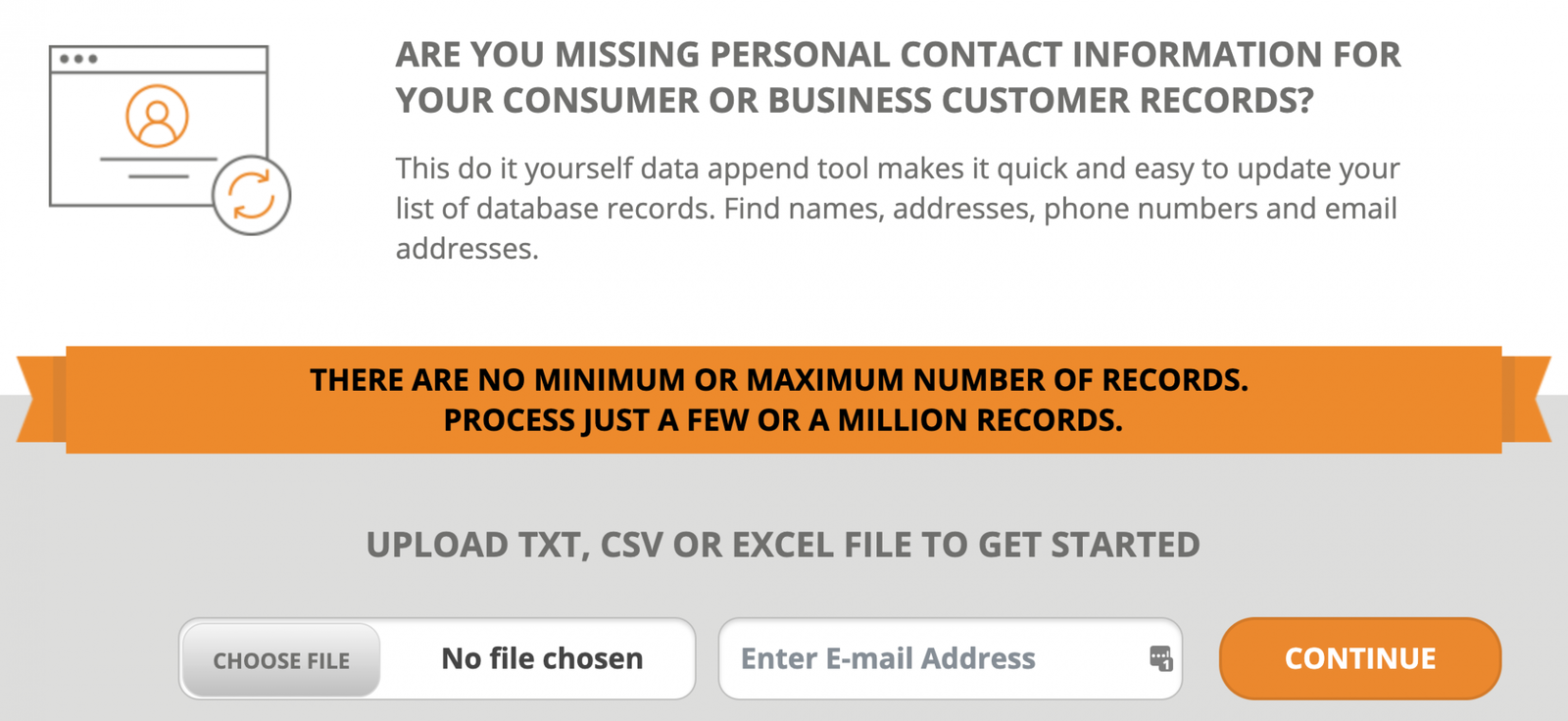

- Export your data as a .csv, .txt, or Excel file. Separate each piece of information into its own column. For personal information, there should be an individual column for first name, last name, street address, city, etc.

- Upload your list for processing. This can be done entirely through your web browser. During the upload process, you’ll select which pieces of information you want to verify and append to the list. The results are usually returned in a matter of minutes unless you have an extremely large list to process. Your list will be returned as a .csv file.

- Reload your list into the system where you use the data: CRM system, lead generation database, fulfillment system…
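If your source system stores names in a single field, the export step above can be sketched in Python like this. The column layout and the `name` input field are hypothetical, and splitting on the first space is a rough heuristic that won’t suit every name.

```python
import csv

COLUMNS = ["first_name", "last_name", "street_address", "city"]  # hypothetical layout


def split_full_name(full_name):
    """Split on the first space; everything after it is treated as the last name."""
    first, _, last = full_name.strip().partition(" ")
    return first, last.strip()


def export_contacts(contacts, out_file):
    """Write contacts to out_file as CSV with one column per piece of information."""
    writer = csv.DictWriter(out_file, fieldnames=COLUMNS)
    writer.writeheader()
    for contact in contacts:
        first, last = split_full_name(contact["name"])
        writer.writerow({
            "first_name": first,
            "last_name": last,
            "street_address": contact["street_address"],
            "city": contact["city"],
        })
```

To write a real file, open it with `open("contacts.csv", "w", newline="")` and pass the file object as `out_file`. The `newline=""` argument is what the `csv` module expects so that line endings are not doubled on some platforms.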

The key is to perform these steps immediately before you use your data. Personal data becomes outdated fast. Avoid letting your verified data sit for too long before putting it to work. Otherwise, you’ll need to verify it all over again.
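One way to enforce that habit is to store a timestamp with each verified record and flag anything too old before you use it. The sketch below assumes a hypothetical `verified_at` field and a 30-day limit; pick a threshold that fits how quickly your own data goes stale.

```python
from datetime import datetime, timedelta

MAX_AGE = timedelta(days=30)  # assumed threshold, not a recommendation


def needs_reverification(verified_at, now, max_age=MAX_AGE):
    """True if the record was never verified or was verified too long ago."""
    if verified_at is None:
        return True
    return now - verified_at > max_age


def stale_records(records, now):
    """Return the records that should be verified again before use."""
    return [r for r in records if needs_reverification(r.get("verified_at"), now)]
```

Calling `stale_records(records, datetime.now())` right before a mailing or a sales push gives you the list to send back through batch verification.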

Data integration

If you offer data processing as a service, data integration is the way to go. There’s simply too much data that needs to be processed too quickly to upload files manually. You’d probably need a full-time employee just to keep up with the demand.

The best way to validate all your data is to use an API to integrate data verification into your data processing pipeline. That way, you can build data processing tools that automatically send data out for verification before you do anything with it. The data cleaning process will be transparent and completely hands-free.
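In code, “verify before you do anything else” can be as simple as a gate at the front of the pipeline. This is a minimal Python sketch, not any particular vendor’s integration: `verify` stands in for whatever function calls your verification service, and `process` stands in for your existing processing step. Both names are hypothetical.

```python
from typing import Callable, Iterable, Iterator

Record = dict
Verifier = Callable[[Record], bool]


def verified_only(records: Iterable[Record], verify: Verifier, rejected: list) -> Iterator[Record]:
    """Yield records that pass verification; collect the rest for review."""
    for record in records:
        if verify(record):
            yield record
        else:
            rejected.append(record)


def run_pipeline(records, verify, process):
    """Verify every record first, then hand only the good ones to process()."""
    rejected = []
    results = [process(r) for r in verified_only(records, verify, rejected)]
    return results, rejected
```

Passing the verifier in as an argument keeps the pipeline independent of any one service, and lets you test it with a stand-in function before wiring up the real API.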

Now, there will be at least a little bit of development work to complete the data integration, especially if you’re doing data processing with Python or using a proprietary system that you built.

However, in most cases the development is minimal. The API integrates with a unique URL. There’s no API key to deal with and no third-party software to integrate.
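A URL-based integration often comes down to building a query string and reading the reply. The Python sketch below uses only the standard library. The base URL is a placeholder, and the parameter names and JSON response format are assumptions, so check your provider’s documentation for the real request and response details.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Placeholder only: substitute the unique URL issued for your account.
BASE_URL = "https://api.example.invalid/verify"


def build_request_url(record, base_url=BASE_URL):
    """Encode the record's non-empty fields as a query string on the base URL."""
    params = {key: value for key, value in record.items() if value}
    return f"{base_url}?{urlencode(params)}"


def verify_record(record, base_url=BASE_URL, timeout=10):
    """Send one record for verification and return the decoded JSON reply.

    Assumes the service answers GET requests with a JSON body.
    """
    with urlopen(build_request_url(record, base_url), timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping URL construction in its own function means you can check exactly what would be sent without making a network call.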

Additionally, data validation will more than cover the cost of paying your development team to perform the integration. You can add the data validation to your data processing agreement and raise your prices accordingly.

Data validation also reduces strain on your customer service teams. You’ll deliver more accurate results, which makes for a better customer experience and fewer complaints.

Really, if you’re not validating data as part of your data processing service, you’re missing out on a lot of potential revenue and operating far less efficiently than you should be. That’s the bottom line.

Checking your work

If you use data for business, you need to validate it. There’s just no other way to eliminate the errors that cost money and time and erode your customer experience.

If you need dependable data, check out the Searchbug batch services or our data validation APIs.
